Table of Links

Related Work

2.1 Semantic Typographic Logo Design

3.1 General Workflow and Challenges

Discussion

8.1 Personalized Design: Intent-aware Collaboration with AI

8.2 Incorporating Design Knowledge into Creativity Support Tools

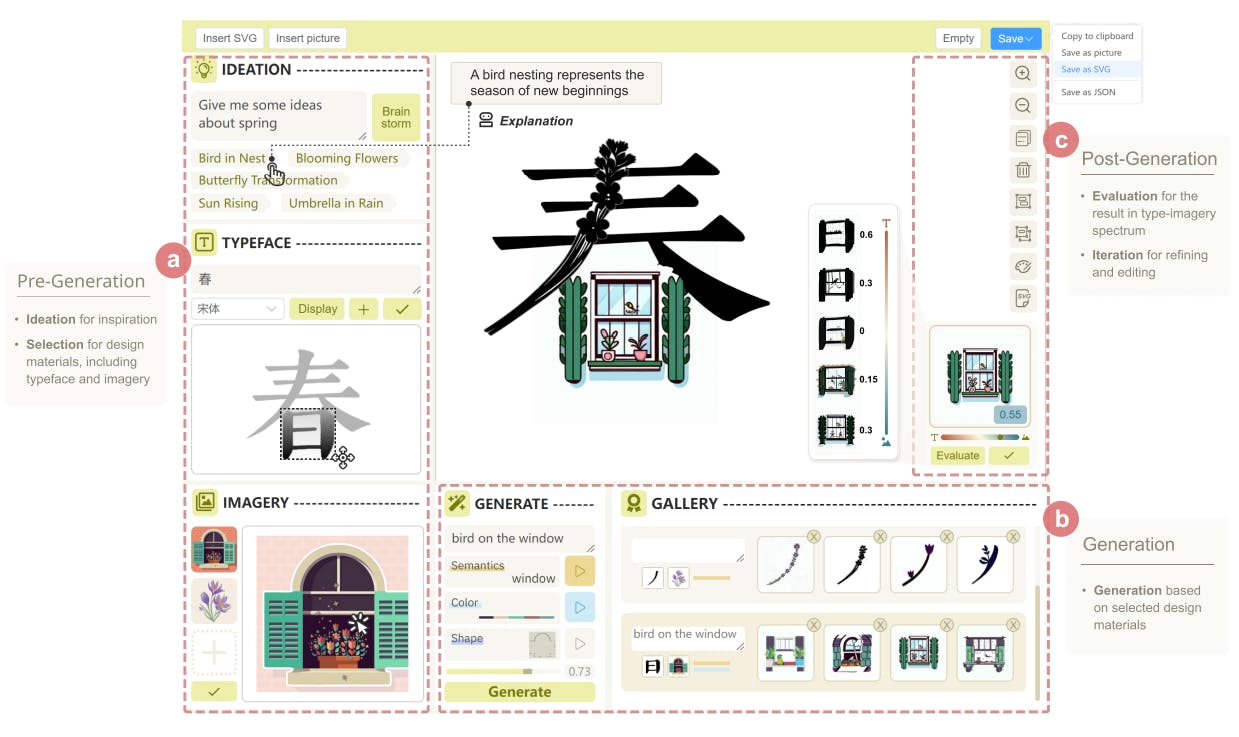

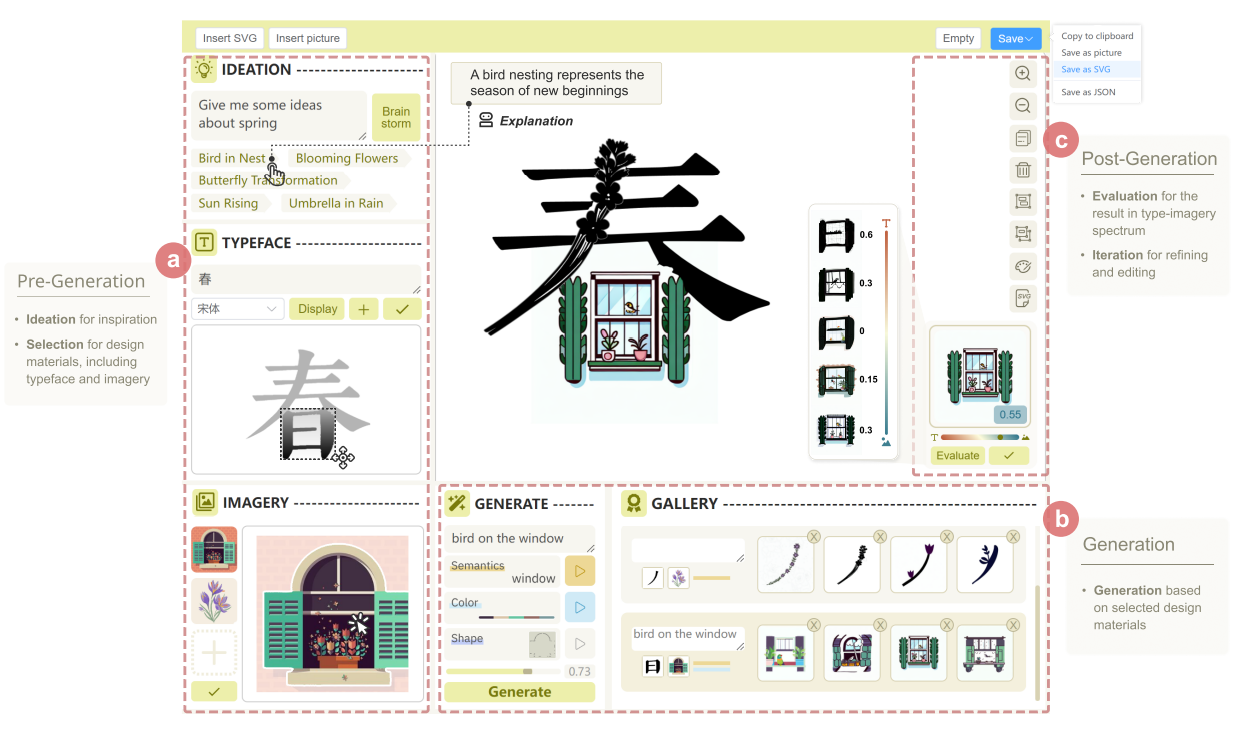

6 INTERFACE WALKTHROUGH

6.1 Pre-generation stage

6.2 Generation stage

6.2.2 Regenerating with appropriate strength. Recognizing that the generated result is closer to the typeface, Alice deletes the unwanted results and adjusts the design factor strength to 0.86 using the slider. In the subsequent round, she finds a desirable result and clicks it. The chosen design is then displayed in the central canvas.

6.3 Post-generation stage

6.3.1 Evaluating and refining the generated result. To assess the legibility of the chosen design, Alice navigates to the right side of the canvas and clicks the [Evaluation] button in the EVALUATION panel. The result currently sits on the imagery side of the slider, at a value of 0.55. Aiming to explore positions closer to the typeface side, she drags the slider to the left. After several trials, Alice obtains a series of results, as shown in Fig. 5.

Authors:

(1) SHISHI XIAO, The Hong Kong University of Science and Technology (Guangzhou), China;

(2) LIANGWEI WANG, The Hong Kong University of Science and Technology (Guangzhou), China;

(3) XIAOJUAN MA, The Hong Kong University of Science and Technology, China;

(4) WEI ZENG, The Hong Kong University of Science and Technology (Guangzhou), China.

This paper is